Introducing the Exoscale Network Load Balancer

Antoine Coetsier

Today we are happy to make our Network Load Balancer (NLB) service generally available.

NLB allows users to provision a scalable and highly available transport-level (Layer 4, TCP/UDP) load balancer that distributes incoming traffic to compute instances.

The service launches in all Exoscale zones globally and comes with the following features:

- Seamless integration with Instance Pools

- Advanced health check of pool members via TCP or HTTP

- Choice of load balancing strategies: Round Robin, Source Hash

- Preservation of source IP for comprehensive logs

- Maximum throughput via direct packet return from the instance serving the request
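The two load balancing strategies listed above differ in how a pool member is picked for each new connection. The sketch below is a minimal illustration, not Exoscale's implementation: the member addresses, the function names, and the choice of SHA-256 over the client IP as the hash input are all assumptions made for the example.

```python
import hashlib
from itertools import cycle

# Hypothetical pool member addresses, for illustration only.
MEMBERS = ["10.0.0.11", "10.0.0.12", "10.0.0.13"]


def round_robin(pool):
    """Return an iterator that hands out pool members in turn, wrapping around."""
    return cycle(pool)


def source_hash(pool, source_ip):
    """Map a client address to a pool member deterministically.

    Assumption: only the source IP is hashed; a real balancer may use
    other fields as well.
    """
    digest = hashlib.sha256(source_ip.encode("ascii")).digest()
    index = int.from_bytes(digest[:8], "big") % len(pool)
    return pool[index]


if __name__ == "__main__":
    picker = round_robin(MEMBERS)
    # Four consecutive connections cycle through the three members.
    print([next(picker) for _ in range(4)])
    # The same client address maps to the same member on every call.
    print(source_hash(MEMBERS, "203.0.113.7"))
```

Round Robin spreads connections evenly regardless of who sends them, while a source hash keeps a given client on the same member for as long as the pool does not change.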

Differences Between Elastic IP (EIP) and Network Load Balancer (NLB)

The Network Load Balancer service launches on top of the existing EIP feature. It complements the network capabilities of the compute stack with a better option for high availability architectures, and further enhances platform scalability.

Here is a quick summary of how the products compare, and their best use cases:

| Feature | EIP | NLB |
|---|---|---|
| Visibility | Makes 1 external IP assignable to multiple instances | Makes 1 external IP assignable to multiple groups of instances |
| Granularity | Sends ALL IP traffic to 1 or multiple instances | Sends selected port traffic to a selected Instance Pool |
| Balancing capabilities | Unweighted Round Robin | True Round Robin or Source Hash |
| Health checks | Optional, non-observable | Observable, fine-grained |
| Best for | Principal/secondary failovers (e.g. database clusters) | Active/active architectures |

Benefits of the Exoscale Network Load Balancer

While load balancers can provide great benefits when designing high availability architectures, not all load balancers are implemented the same way.

One of the features that makes the Exoscale NLB stand out is its very high throughput. This is achieved by integrating the NLB into our own Software Defined Network (SDN), which distributes the routing logic across the infrastructure instead of channeling traffic through a single point, or even a cluster.

A key element of this high throughput is that traffic for the Exoscale NLB follows a direct return path:

- Client request reaches NLB IP address

- NLB selects the pool member for the request

- NLB forwards the request to the selected member via the SDN

- The destination node receives and processes the request

- Destination node sends reply back

- The SDN forwards the reply directly to the client, bypassing the NLB
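The steps above can be modeled as two paths: the request enters through the NLB, while the reply does not go back through it. The toy model below only counts hops and is a sketch under that reading; the node labels and function names are invented for the example, and the proxy-style branch is included purely for comparison.

```python
def request_path(client: str, nlb: str, member: str) -> list[str]:
    """Hops taken by an incoming request: it always enters through the NLB."""
    return [client, nlb, member]


def reply_path(client: str, nlb: str, member: str,
               direct_return: bool = True) -> list[str]:
    """Hops taken by the reply, with or without direct return."""
    if direct_return:
        # The reply leaves the member and goes straight back to the client.
        return [member, client]
    # Proxy-style balancing, for comparison: the reply crosses the balancer.
    return [member, nlb, client]


def hops_through(node: str, *paths: list[str]) -> int:
    """Count how many of the given paths traverse a node."""
    return sum(node in path for path in paths)


if __name__ == "__main__":
    CLIENT, NLB, MEMBER = "client", "nlb", "member"  # hypothetical labels
    request = request_path(CLIENT, NLB, MEMBER)
    direct = reply_path(CLIENT, NLB, MEMBER)
    proxied = reply_path(CLIENT, NLB, MEMBER, direct_return=False)
    print(hops_through(NLB, request, direct))   # prints 1
    print(hops_through(NLB, request, proxied))  # prints 2
```

With direct return the balancer sees only one direction of each exchange, so reply traffic never has to pass through it.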

Here is an example benchmark scenario:

- We’ve set up 5 clients located in SOF1

- The clients hold 500 workers (with 500 different source ports) that generate up to 2500 concurrent connections

- Requests are sent to a dummy application served by 2 NGINX servers deployed on an Instance Pool located in MUC1

- With the NLB, throughput reached 96.32 % of that of a direct EIP connection using the Source Hash strategy, and 99.27 % using Round Robin.

| Method | 90th percentile | 95th percentile |
|---|---|---|
| EIP | 46.089 ms | 43.836 ms |
| NLB with Round Robin | 47.047 ms | 44.158 ms |
| NLB with Source Hash | 49.2 ms | 45.45 ms |
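As a sanity check, the two percentages quoted in the benchmark (99.27 % and 96.32 %) can be reproduced from the 95th percentile column as 100 % minus the relative increase over the EIP baseline. This reading is inferred from the figures, not stated in the benchmark description, so treat the helper below (`relative_to_baseline`, a name made up for this example) as a sketch.

```python
# 95th percentile column of the table above, in milliseconds.
P95_MS = {
    "EIP": 43.836,
    "NLB with Round Robin": 44.158,
    "NLB with Source Hash": 45.45,
}


def relative_to_baseline(value: float, baseline: float) -> float:
    """Return 100 % minus the relative increase of value over baseline."""
    return 100.0 * (1.0 - (value - baseline) / baseline)


# Compare each NLB strategy against the plain EIP measurement.
results = {
    method: round(relative_to_baseline(ms, P95_MS["EIP"]), 2)
    for method, ms in P95_MS.items()
    if method != "EIP"
}

if __name__ == "__main__":
    for method, percent in results.items():
        print(f"{method}: {percent} %")
```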

Simple Pricing

Exoscale NLB launches with a simple pricing structure that makes it affordable compared to a self-hosted approach. It is also highly predictable, with no hidden costs or complicated calculations, unlike most other cloud providers:

| Item | Exoscale Pricing | Competition |
|---|---|---|
| NLB | CHF 25.- / month, USD 25 / month, EUR 22.73 / month | Fixed fee |
| Requests | Included | Pay per request or session |
| Health checks | Included | Pay per check |
| Active sessions | Included | Quota or additional charge |
| Traffic | No overcharge | Additional fee |

Get Started!

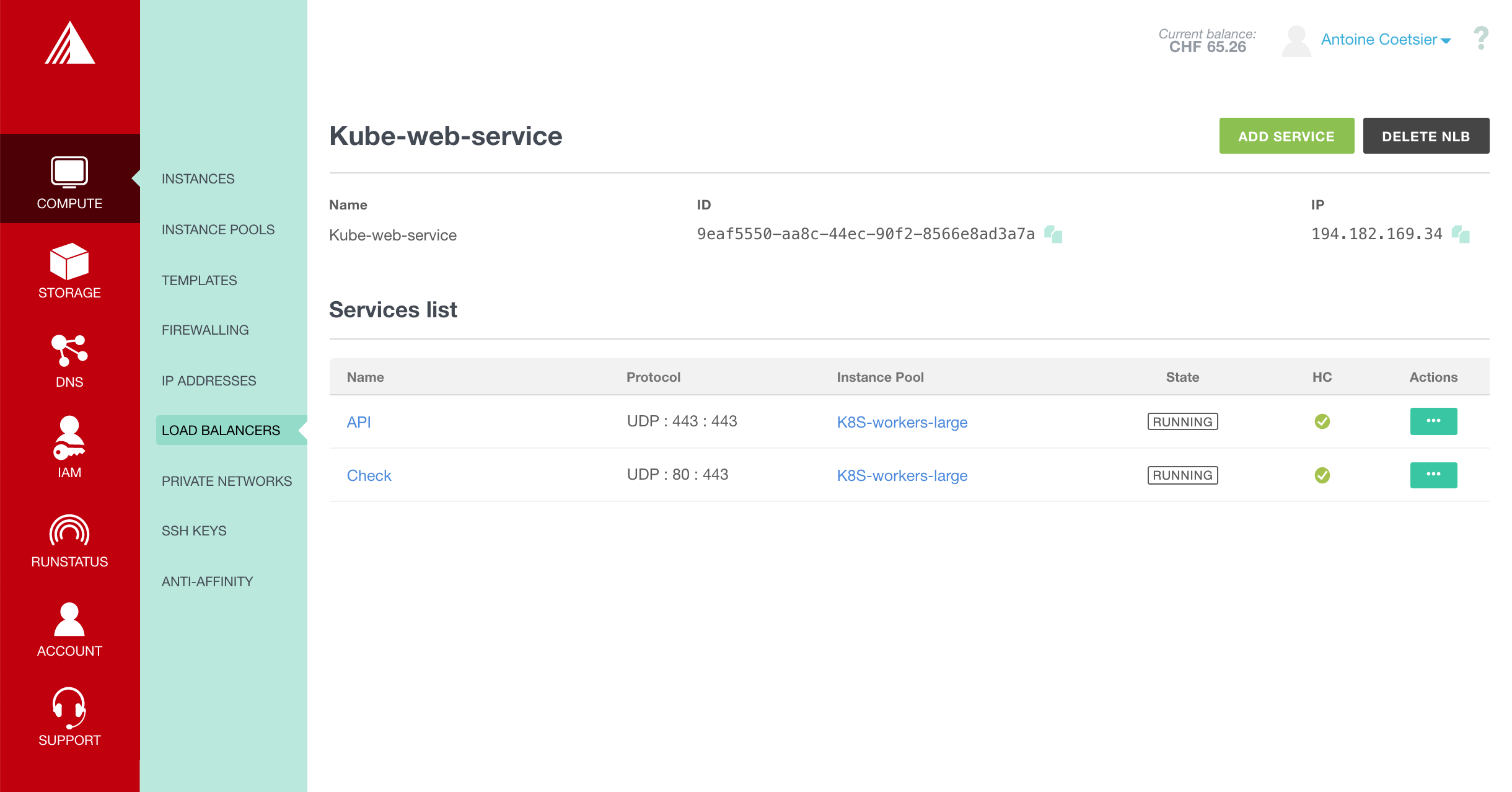

You can start to use NLB today through our web application, CLI, and integrations like Terraform.

Read on to discover how to launch a complete load balanced setup using simple steps with the Exoscale CLI or jump directly to production with the NLB documentation.

We would like to thank the customers and partners who have been part of the early adopter program over the last few months. They have provided great feedback on the feature set and usability of the product.

We will continue adding support for additional tools and integrations, notably with a native Kubernetes cloud controller manager to be released next month.