There has been a lot of talk about Apache Mesos lately, and the latest DevOpsCH Meetup in Switzerland was the perfect opportunity to find out more.

Reliability

The main talk was given by Tobias Weingartner, a senior SRE (Site Reliability Engineer) at Twitter in charge of the Mesos clusters in Twitter's production and development environments. The talk was packed with anecdotes about running infrastructure at that scale, and the sizes Tobias described defy comprehension. The bottom line is that Mesos has improved reliability at Twitter so much that running an application outside the Mesos clusters now requires a ton of approval and validation!

Intrigued by the capabilities of Mesos and by the scale of its use at Twitter, I set about running a small test of the Aurora + Mesos combo.

Setup

Although the main talk was about Mesos, Mesos does not work on its own out of the box: it needs a scheduler (a Mesos framework) to launch tasks. Apache Aurora is one of the two schedulers supported right now. As Tobias pointed out, nothing prevents a custom integration with Mesos directly, but that would require a much longer blog post!

Cloning the repo

Clone the repo from GitHub (the location might change, as the project is still in the Apache incubation process):

git clone https://github.com/apache/incubator-aurora.git

cd incubator-aurora

Build the project

You will need to build the project as tar deliverables, since these are then synced by Vagrant to the boxes. You can do this by running the ./gradlew distTar command in the main directory. Refer to the Aurora documentation if this fails in your environment; building secondary artifacts might be needed too.

The best inspiration for this is simply the provision-dev-environment.sh script in the examples/vagrant/ directory.
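Before moving on to Vagrant, it can help to confirm that the build actually produced the tar deliverables. This is a minimal sketch, assuming Gradle's default output directory for distTar (build/distributions); find_dist_tars is a hypothetical helper, and Aurora's build may place its archives elsewhere, in which case pass the directory explicitly:

```bash
#!/usr/bin/env bash
# Hypothetical helper: list the .tar files produced by `./gradlew distTar`.
# Assumes Gradle's default distribution output directory (build/distributions);
# pass another directory as $1 if your build writes elsewhere.
find_dist_tars() {
  local dir="${1:-build/distributions}"
  if [[ ! -d "$dir" ]]; then
    echo "no distribution directory: $dir (did ./gradlew distTar run?)" >&2
    return 1
  fi
  # Only look at the directory itself; sort for stable output.
  local tars
  tars="$(find "$dir" -maxdepth 1 -type f -name '*.tar' | sort)"
  if [[ -z "$tars" ]]; then
    echo "no .tar deliverables found in $dir" >&2
    return 1
  fi
  printf '%s\n' "$tars"
}
```

From the repository root, something like ./gradlew distTar && find_dist_tars fails loudly if no archive was produced, before Vagrant tries to sync anything.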

Vagrant

The Aurora project comes bundled with an example Vagrantfile template. This template will enable us to launch the following 6 instances:

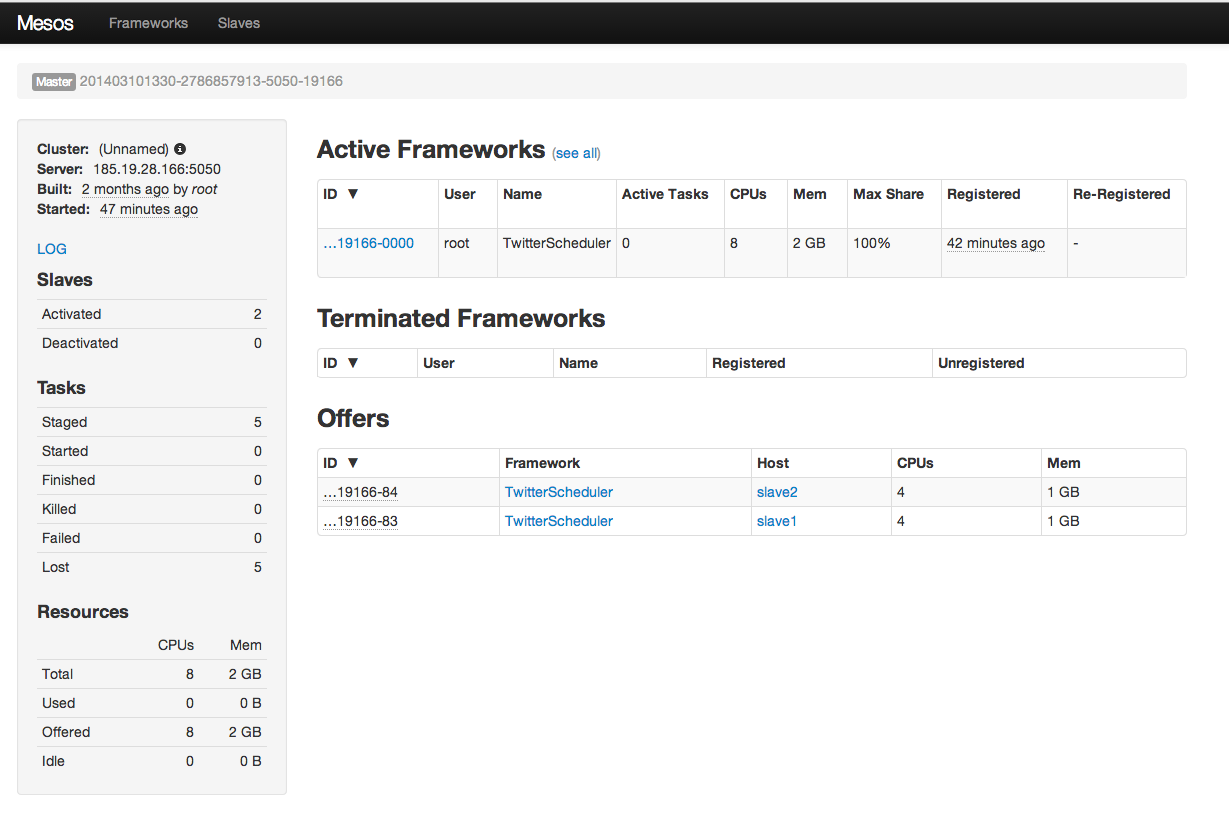

- Mesos master: its role is to dispatch jobs to the slaves. In production it is recommended to run at least 3.

- Mesos slaves 1 and 2: these are the workhorses that actually run the jobs. Each job is launched in a Linux container on standard Ubuntu Precise (12.04) instances.

- ZooKeeper: the well-known distributed coordination service. It keeps track of where everything runs so that traffic reaches the right slave; no DNS service could update quickly enough to keep up with an ever-changing Mesos cluster.

- Aurora scheduler: this service schedules jobs and registers them with the Mesos master. In production, running more than one is also highly recommended.

- Devtools: this instance contains the tools to run the demo, such as Thermos.
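The "at least 3 masters" advice follows from majority quorums: in Mesos' replicated-log setup (with ZooKeeper handling leader election), the masters only make progress while a majority of them is up, and the Mesos master's --quorum flag must be set to such a majority. The arithmetic below is a small illustrative sketch; the function names are hypothetical:

```bash
#!/usr/bin/env bash
# Illustrative arithmetic for sizing a replicated set of masters
# (Mesos masters or ZooKeeper nodes): a majority must stay up.

# Smallest majority of n members: floor(n/2) + 1.
quorum_size() {
  local n="$1"
  echo $(( n / 2 + 1 ))
}

# How many members can fail while a majority remains.
tolerated_failures() {
  local n="$1"
  echo $(( n - $(quorum_size "$n") ))
}

for n in 1 2 3 5; do
  echo "masters=$n quorum=$(quorum_size "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

With 3 masters the quorum is 2, so one master can fail; with 2 masters the quorum is also 2, so a second master adds no fault tolerance, which is why odd sizes are the usual choice.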

Vagrant on Exoscale

As this little setup requires 6 instances, it makes sense to launch them away from my laptop, whose memory is already taken up by browser tabs.

Switching this Vagrantfile from its standard VirtualBox configuration to Exoscale requires only minor modifications. Check out this previous article for full details on using Vagrant with Exoscale.

vagrant-hostmanager

For all these machines to communicate with each other on a public cloud, we need to replace the private IP addresses used in the Vagrantfile and in the provisioning scripts.

We will use a Vagrant plugin called vagrant-hostmanager, which manages the hosts files of all boxes in the setup.

This is done by installing the plugin in your setup:

vagrant plugin install vagrant-hostmanager

and adding the following to the Vagrantfile:

config.hostmanager.enabled = true

in order to enable the service.
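If you rebuild the environment often, the plugin installation can be made idempotent by checking vagrant plugin list first. This is a minimal sketch: plugin_listed and ensure_vagrant_plugin are hypothetical helpers, and they assume the first whitespace-separated field of each vagrant plugin list line is the plugin name (as in "vagrant-hostmanager (1.x.y)"):

```bash
#!/usr/bin/env bash
# Hypothetical helpers around `vagrant plugin list` / `vagrant plugin install`.

# Succeeds if plugin $1 appears in the plugin list read from stdin.
# Assumes the first whitespace-separated field of each line is the plugin name.
plugin_listed() {
  local name="$1"
  awk -v n="$name" '$1 == n { found = 1 } END { exit !found }'
}

# Install plugin $1 only if it is not already installed.
ensure_vagrant_plugin() {
  local name="$1"
  if vagrant plugin list | plugin_listed "$name"; then
    echo "$name already installed"
  else
    vagrant plugin install "$name"
  fi
}
```

Calling ensure_vagrant_plugin vagrant-hostmanager is then safe to repeat; the same helper could presumably cover the CloudStack provider plugin used with Exoscale.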

To make things easier, build on this fork, whose exoscale branch already contains the Exoscale changes, and just add your APIKEY and SECRETKEY:

git clone https://github.com/retrack/incubator-aurora --branch exoscale

cd incubator-aurora

cp Vagrantfile Vagrantfile.local && mv Vagrantfile.exoscale Vagrantfile

vi Vagrantfile

Replace the 3 required fields:

- APIKEY

- SECRETKEY

- SSHKEY name and path

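Most importantly, vagrant-hostmanager will also update your local /etc/hosts file, giving you nice names to play with (this relies on the plugin's config.hostmanager.manage_host option being enabled in the Vagrantfile).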
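Once the boxes are up, you can check that the host names this setup relies on (aurora, master and devtools, used for the web interfaces) actually made it into your local hosts file. A minimal sketch; hosts_has_name is a hypothetical helper that parses the standard hosts-file format (IP address first, then names, # starting a comment):

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if NAME is listed as a host name in a hosts file.
# Hosts-file lines look like: "<ip> <name> [<alias> ...]  # optional comment".
hosts_has_name() {
  local name="$1" file="${2:-/etc/hosts}"
  awk -v n="$name" '
    /^[[:space:]]*#/ { next }            # skip full-line comments
    {
      for (i = 2; i <= NF; i++) {        # field 1 is the IP address
        if ($i ~ /^#/) break             # ignore trailing comments
        if ($i == n) { found = 1; exit }
      }
    }
    END { exit !found }' "$file"
}

# Report which of the expected names are present in /etc/hosts.
for h in aurora master devtools; do
  if hosts_has_name "$h"; then echo "ok: $h"; else echo "missing: $h"; fi
done
```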

Running the cluster

With this long introduction over, it is time to launch the cluster. Simply run the following in the directory containing the modified Vagrantfile:

vagrant up --provider=cloudstack --no-parallel

Note: we explicitly tell Vagrant to deploy the instances sequentially to avoid dependency conflicts, e.g. a slave coming up before the master, …

Connect to the interfaces

There are 3 interfaces that you can connect to:

- Aurora: http://aurora:8081/scheduler/

- Mesos: http://master:5050/

- Observer: http://devtools:1338/
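To check all three interfaces in one go from your workstation, a small sketch using curl is shown below. The helper names are hypothetical; the URLs are the ones listed above and depend on the hosts-file entries managed by vagrant-hostmanager:

```bash
#!/usr/bin/env bash
# Web interfaces of the demo cluster (host names resolve through the
# hosts-file entries managed by vagrant-hostmanager).
ui_urls() {
  printf '%s\n' \
    "http://aurora:8081/scheduler/" \
    "http://master:5050/" \
    "http://devtools:1338/"
}

# Print the HTTP status code for a URL; curl reports 000 when no response arrives.
http_status() {
  # -s: silent, -o /dev/null: discard the body, -w: print only the status code.
  curl -s -o /dev/null -w '%{http_code}' --max-time 5 "$1" || true
}

# Probe every interface and print "<status> <url>" lines.
check_uis() {
  local url code
  while IFS= read -r url; do
    code="$(http_status "$url")"
    echo "$code $url"
  done < <(ui_urls)
}
```

Run check_uis once vagrant up has finished; a 2xx or 3xx code means the interface answered, while 000 usually points to a name-resolution or firewall problem.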

Launch a Job

To actually do something with this cluster, log on to the Aurora scheduler instance:

vagrant ssh aurora-scheduler

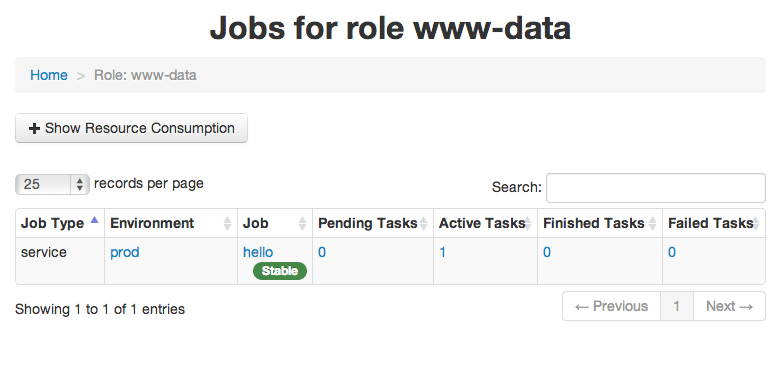

Then launch a sample “Hello World” job:

aurora create example/www-data/prod/hello /vagrant/examples/jobs/hello_world.aurora
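The first argument to aurora create is a job key of the form cluster/role/environment/name, so example/www-data/prod/hello names the hello job of role www-data in the prod environment of the example cluster. The sketch below splits and sanity-checks such a key before handing it to the client; split_job_key is a hypothetical helper, not part of the Aurora client:

```bash
#!/usr/bin/env bash
# Hypothetical helper: split an Aurora job key "cluster/role/environment/name"
# into its four parts, rejecting keys with a different number of components.
split_job_key() {
  local key="$1" cluster role env name extra
  # Anything beyond the fourth component lands in "extra".
  IFS=/ read -r cluster role env name extra <<< "$key"
  if [[ -z "$cluster" || -z "$role" || -z "$env" || -z "$name" || -n "$extra" ]]; then
    echo "invalid job key: $key (expected cluster/role/environment/name)" >&2
    return 1
  fi
  echo "cluster=$cluster role=$role environment=$env name=$name"
}
```

For example, split_job_key example/www-data/prod/hello && aurora create example/www-data/prod/hello /vagrant/examples/jobs/hello_world.aurora only calls the client when the key has all four parts.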

Check that the job is running in the Aurora web interface.

To be continued

Now that the infrastructure is in place, exploring the features of Mesos and the Aurora scheduler will come in a future post. In the meantime, check out Aurora’s user guide.

There should also be a much nicer and more reliable way to deploy these few components than relying on shell scripts. Maybe leveraging Puppet or Chef provisioning in Vagrant would do the trick.